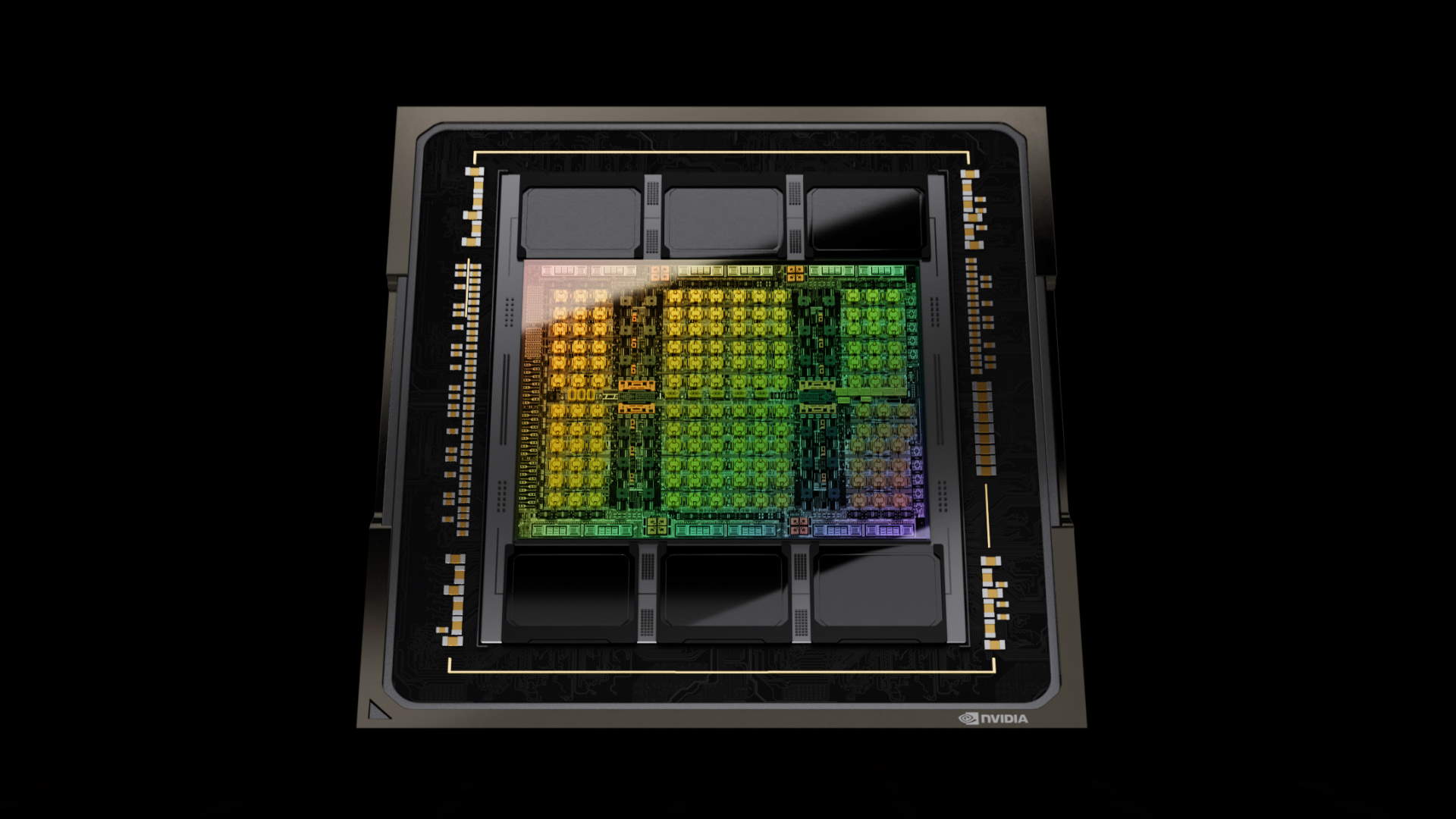

Nvidia’s AI is helping its engineers to bring better AI to the market faster

As you’re probably aware, there’s an insatiable demand for AI and the chips it needs to run on. So much so that Nvidia is now the world’s sixth-largest company by market capitalization, at $1.73 trillion at the time of writing. It’s showing few signs of slowing down, as even Nvidia is struggling to meet demand in this brave new AI world. The money printer goes brrrr.

In order to streamline the design of its AI chips and improve productivity, Nvidia has developed a Large Language Model (LLM) it calls ChipNeMo. It essentially harvests data from Nvidia’s internal architectural information, documents and code to give it an understanding of most of its internal processes. It’s an adaptation of Meta’s Llama 2 LLM.

It was first unveiled in October 2023 and, according to the Wall Street Journal (via Business Insider), feedback has been promising so far. Reportedly, the system has proven useful for training junior engineers, allowing them to access data, notes and information via its chatbot.

An internal AI chatbot lets data be parsed quickly, saving a lot of time by removing the need to use traditional methods like email or instant messaging to access certain data and information. Given the time it can take to get a response to an email, let alone across different facilities and time zones, this method is surely delivering a welcome boost to productivity.

Nvidia is forced to fight for access to the best semiconductor nodes. It’s not the only one opening the chequebooks for access to TSMC’s cutting-edge nodes. As demand soars, Nvidia is struggling to make enough chips. So, why buy two when you can do the same work with one? That goes a long way to explaining why Nvidia is trying to speed up its own internal processes. Every minute saved adds up, helping it to bring faster products to market sooner.

Things like semiconductor design and code development are great fits for LLMs. They’re able to parse data quickly and perform time-consuming tasks like debugging and even simulations.

I mentioned Meta earlier. According to Mark Zuckerberg (via The Verge), Meta may have a stockpile of 600,000 GPUs by the end of 2024. That’s a lot of silicon, and Meta is just one company. Throw the likes of Google, Microsoft and Amazon into the mix and it’s easy to see why Nvidia wants to bring its products to market sooner. There are mountains of money to be made.

Big tech aside, we’re a long way from fully realizing the uses of edge-based AI in our own home systems. One can imagine that AI which designs better AI hardware and software is only going to become more important and prevalent. Slightly scary, that.